Artificial Intelligence (AI) undoubtedly has the potential to enable completely new kinds of research, especially in areas that require the “analysis” of very large amounts of data. This is particularly so in fields as diverse as modelling environmental change and medical diagnostics. However, I am shocked almost every day by the scale at which it is now used overtly to cheat (and to seek an advantage over others) and to support downright laziness in academic research.

Many universities have introduced AI policies for students (both undergraduates and postgraduates) that concentrate largely on identifying permitted and prohibited uses of AI, and especially on penalising perceived abuse thereof. All too often these policies fail sufficiently to recognise how it can indeed be used positively and constructively. What such policies also fail to recognise is the scale at which deceit was already widely practised in universities prior to the advent of AI (see my posts in 2010 on University Students Cheating and on plagiarism and the production of knowledges, and in 2021 On PhDs). As with so many digital technologies, AI serves to accentuate existing aspects of human behaviour. With the massification and grade inflation that have occurred in higher education over the last quarter of a century, it is scarcely surprising that some (perhaps most) people will use any means at their disposal to gain the highest level of certification with the least amount of effort.

What is deeply worrying is the speed at which the use of AI is transforming – and possibly destroying – traditional values of academic integrity and labour. Two recent examples highlight the scale of the challenge:

- Prize nominations. I have recently been on several boards reviewing nominations/applications for prizes. Increasing numbers of these appeared suspicious to me, and a quick check with a variety of AI detectors indicated high probabilities that they were indeed produced through the use of AI. Examining some of the entries for international awards ceremonies over the last couple of years also suggests that several of the winning entries were produced through the use of AI, and that some of the evidence adduced therein was not based on physical reality. The reasons are obvious, with potential winners believing that they can gain an advantage through the use of such technologies.

- Research proposals. In the last couple of years, an increasing number of the proposals I receive from prospective postgraduate or post-doc applicants have clearly been produced using AI. This is deeply concerning, not least because it provides no evidence that an applicant is indeed able to undertake independent research, and were such applicants to be accepted there would probably be very real problems during the research process. However, what has provoked me to write this short post is that one such applicant seemed to express surprise that I should actually want to receive an application written only by a human!

Some members of review panels clearly do not mind if AI has been used to enhance a proposal, but I remain very concerned for four main reasons:

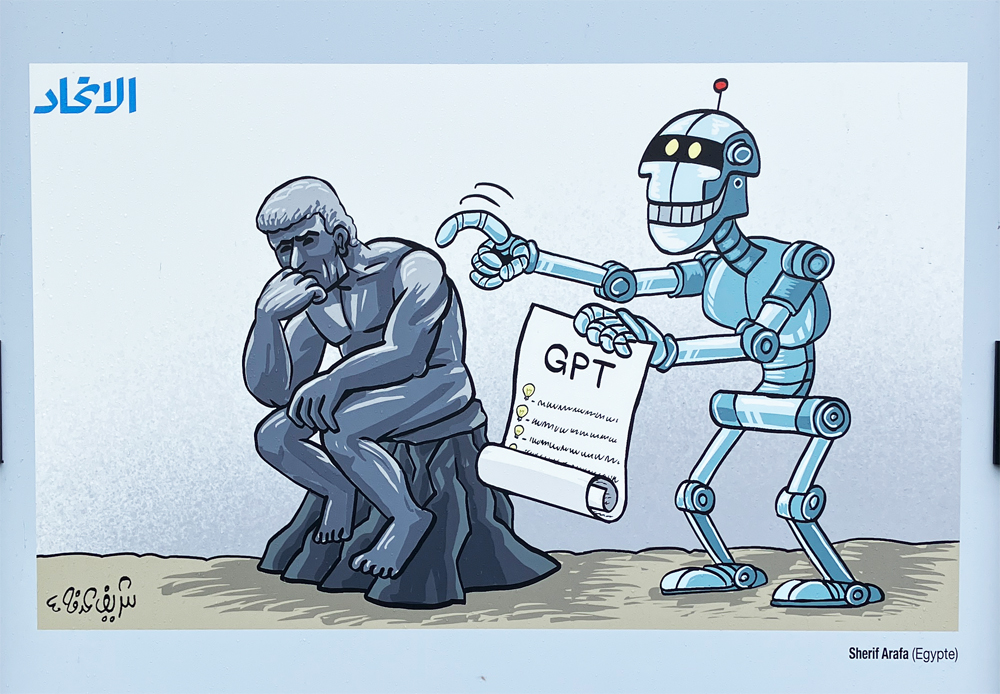

- Above all, extensive reliance on AI to design research (and presumably therefore also to undertake and produce it) will take away the ability of human researchers to think for themselves and create new ideas. This is already happening, but any loss of this ability is deeply problematic, not least since it increasingly limits our individual and collective ability to be resilient and solve new challenges in the future. I fundamentally disagree with arguments that suggest this does not matter because it will enable our brains to concentrate on other, higher-level, functionalities.

- Second, though, AI is only as good as the data drawn upon by its algorithms. Such data, by definition, always come from the past, and are biased. Hence, it cannot be truly innovative. All it does (at present) is reconfigure existing knowledge in new ways. To be sure, this can be interesting, but randomness (not least in our genetic makeup – although genetic drift is now seen as being less random than was previously thought) and serendipity are key elements of true innovation and creativity. These are what have enabled us to survive as a race, and if we lose them we will lose not only our souls but also our physical ability to function.

- Third, all too often people using AI do so because they find thinking too difficult and/or they are lazy. They want a quick solution without the effort. Yet if we do not use our brains they will atrophy; if we do not draw on our memories, we will forget how to use them. Digital dementia is already a significant problem, but it will become very much more so in the future if we do not continue to exercise our minds. We must cherish real creative and innovative research by humans. This has always been tough; excellence does not come easy. However, it is rewarding. We must do all we can to encourage and reward real and high-quality human research. If not, the mediocre and trivial will increasingly come to dominate our enquiry.

- Fourth, it raises real problems for institutions and the management of research. Focusing on penalising cheating is a sign of failure. What we need to do is to encourage as many people as possible to think new thoughts for themselves. The mundane can indeed be left to those who enjoy trawling through the slop created by “AI pigs”. This will require much tighter processes for the selection of academic researchers (and this also surely applies to industry), along with absolute certainty and rigour over processes designed to penalise those who seek to dissemble. Put simply, all uses of AI should be declared (and on many occasions may be acceptable), and failure to do so should be accompanied by elimination.

Much more could be written (and indeed has already been written by others) on these issues, but when people start assuming that universities and researchers welcome AI-generated proposals or nominations then it is clear that the rot has already set in, and we need to cauterise it as soon as possible. We don’t have the antibiotics yet that can treat this infection!
